In 2013, I discovered the Rust programming language and quickly decided to learn it and make it my main programming language.

In 2017, I moved to Berlin and joined Parity as a Rust developer. The task that occupied my first few months was to build rust-libp2p, a peer-to-peer library in asynchronous Rust (~89k lines of code at the moment). Afterwards, I integrated it in Substrate (~400k lines of code), and have since then been the maintainer of the networking part of the code base.

In light of this recent blog post and this Twitter interaction, I thought it could be a good idea to lay out some of the issues I’ve encountered over time.

Please note that I’m not writing this article on behalf of my employer Parity. This is my personal feedback when working on the most asynchronous-heavy parts of Parity-related projects, but I didn’t show it to anyone before publishing and it might differ from what the other developers in the company would have to say.

General introduction

I feel obliged to add an introduction paragraph, given the discussions and controversies that have happened around asynchronous Rust recently and over time.

First and foremost, I must say that asynchronous Rust is generally in a really good shape. This article is addressed mainly towards programmers that are already familiar with asynchronous Rust, as a way to give my opinion on the ways the design could be improved.

If you’re not a Rust programmer and you’re reading this article to get an idea of whether or not you should use Rust for an asynchronous project, don’t get the wrong idea here: I’m strongly advocating for Rust, and no other language that I’m aware of comes close.

I didn’t title this article “Problems in asynchronous Rust”, even though it’s focusing on problems, again to not give the wrong idea.

The years during which asynchronous Rust has been built have seen lots of tensions in the community. I’m very respectful of the people who have spent their energy debating and handling the overwhelmingly massive flow of opinions. I’ve personally stayed on the sidelines of the Rust community for the last 4–5 years for this exact reason, and have zero criticism to address here.

I won’t focus too much on the past (futures 0.1 and 0.3) but more on the current state of things, since the objective of this feedback is ultimately to drive things forward.

Now that this is all laid out, let’s go for the problematic topics.

Future cancelling problem

I’m going to start with what I think is the most problematic issue in asynchronous Rust at the moment: knowing whether a future can be deleted without introducing a bug.

I’m going to illustrate this with an example: you write an asynchronous function that reads data from a file, parses it, then sends the items over a channel. For example (pseudo-code):

    async fn read_send(file: &mut File, channel: &mut Sender<...>) {
        loop {
            let data = read_next(file).await;
            let items = parse(&data);
            for item in items {
                channel.send(item).await;
            }
        }
    }

Each await point in asynchronous code represents a moment when execution might be interrupted, and control given back to the user of this future. This user can, at their will, decide to drop the future at this point, stopping its execution altogether.

If the user calls the read_send function, then polls it until it reaches the second await point (sending on a channel), then destroys the read_send future, all the local variables (data, items, and item) are silently dropped. By doing so, the user will have extracted data from file, but without sending this data on channel. This data is simply lost.
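The data loss described above can be reproduced with the standard library alone. The following is a minimal sketch, not the original code: the file and the channel are replaced by in-memory queues, and `NoopWaker`, `YieldOnce` and `poll_once_then_drop` are hypothetical helpers introduced only for this illustration.

```rust
use std::cell::RefCell;
use std::collections::VecDeque;
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Waker that does nothing; enough to poll a future by hand.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Pending on the first poll, ready on the second. Stands in for a
/// `channel.send(item)` that has to wait for a free slot.
struct YieldOnce(bool);
impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            Poll::Pending
        }
    }
}

/// Simplified stand-in for `read_send`: takes one item out of `file`,
/// then awaits before pushing it to `channel`.
async fn read_send(file: &RefCell<VecDeque<u32>>, channel: &RefCell<Vec<u32>>) {
    loop {
        let next = file.borrow_mut().pop_front();
        let item = match next {
            Some(item) => item,
            None => return,
        };
        // While suspended here, `item` lives only inside the future.
        YieldOnce(false).await;
        channel.borrow_mut().push(item);
    }
}

/// Polls `read_send` once, drops it, and returns
/// (items still in the file, items that reached the channel).
fn poll_once_then_drop() -> (Vec<u32>, Vec<u32>) {
    let file = RefCell::new(VecDeque::from(vec![1, 2, 3]));
    let channel = RefCell::new(Vec::new());
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    {
        let mut fut = Box::pin(read_send(&file, &channel));
        assert!(fut.as_mut().poll(&mut cx).is_pending());
    } // The future is dropped here, together with the item it was holding.
    (file.into_inner().into(), channel.into_inner())
}
```

Calling `poll_once_then_drop()` leaves items 2 and 3 in the file and nothing in the channel: item 1 has been read but never delivered, without any panic or error.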

You might wonder: why would the user do this? Why would the user poll a future for a bit, then destroy it before it has finished? Well, this is exactly what the futures::select! macro might do.

    let mut file = ...;
    let mut channel = ...;
    loop {
        futures::select! {
            _ = read_send(&mut file, &mut channel).fuse() => {},
            some_data = socket.read_packet().fuse() => {
                // ...
            }
        }
    }

In this second code snippet, the user calls read_send, polls it, but if socket receives a packet, then the read_send future is destroyed and recreated at the next iteration of the loop. As explained, this can lead to data being extracted from the file but not being sent on the channel. This is likely not what the user wants to do here.

Let’s be clear: this is all working as designed. The problem is not so much what happens, but the fact that what happens isn’t what the user intended. One can imagine situations where the user simply wants read_send to stop altogether, and such situations should be allowed as well. The problem is that this is not what we want here.

There exist four solutions to this problem that I can see: (I’m only providing links to playground instead of explaining in detail, for the sake of not making this article too heavy)

- Rewrite the select! to not destroy the future. Example. This is arguably the best solution in that specific situation, but it can sometimes introduce a lot of complexity, for example if you want to re-create the future with a different File when the socket receives a message.
- Ensure that read_send reads and sends atomically. Example. In my opinion the best overall solution, but this isn’t always possible or would introduce an overhead in complex situations.
- Change the API of read_send and avoid any local variable across a yield point. Example. Real world example. This is also a good solution, but it can be hard to write such code, as it starts to become dangerously close to manually-written futures.
- Don’t use select! and spawn a background task to do the reading. Use a channel to communicate with the background task if necessary, as pulling items from channels is cancellation-resilient. Example. Often the best solution, but adds latency and makes it impossible to access file and channel ever again.
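To make the third option more concrete, here is a minimal standard-library sketch of what “no local variable across a yield point” can look like. It is an assumption-laden illustration, not the code behind the links above: the `ReadSend` struct, the in-memory queues, and the `NoopWaker`, `YieldOnce` and `cancel_then_resume` helpers are all hypothetical.

```rust
use std::cell::RefCell;
use std::collections::VecDeque;
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Waker that does nothing; enough to poll a future by hand.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Pending on the first poll, ready on the second.
struct YieldOnce(bool);
impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            Poll::Pending
        }
    }
}

/// Everything that must survive a cancellation lives here, owned by the
/// caller, rather than in a local variable of the future.
#[derive(Default)]
struct ReadSend {
    pending: Option<u32>,
}

impl ReadSend {
    async fn run(&mut self, file: &RefCell<VecDeque<u32>>, channel: &RefCell<Vec<u32>>) {
        loop {
            // Only read a new item if the previous one has been delivered.
            if self.pending.is_none() {
                self.pending = file.borrow_mut().pop_front();
            }
            if self.pending.is_none() {
                return; // The file is exhausted.
            }
            // If the future is dropped here, the item stays in `self.pending`.
            YieldOnce(false).await;
            if let Some(item) = self.pending.take() {
                channel.borrow_mut().push(item);
            }
        }
    }
}

/// Cancels the future after one poll, recreates it, and runs it to the end.
fn cancel_then_resume() -> Vec<u32> {
    let file = RefCell::new(VecDeque::from(vec![1, 2, 3]));
    let channel = RefCell::new(Vec::new());
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut state = ReadSend::default();
    {
        let mut fut = Box::pin(state.run(&file, &channel));
        assert!(fut.as_mut().poll(&mut cx).is_pending());
    } // Cancelled: item 1 is still stored in `state.pending`.
    let mut fut = Box::pin(state.run(&file, &channel));
    while fut.as_mut().poll(&mut cx).is_pending() {}
    drop(fut);
    channel.into_inner()
}
```

Here `cancel_then_resume()` delivers all three items in order despite the cancellation. The price is the one mentioned in the list: the function body is already halfway to a hand-written state machine.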

It is always possible to solve this problem in some way, but what I would like to highlight is an even bigger problem: this kind of cancellation issue is hard to spot and debug. In the problematic example, all you will observe is that on some rare occasions some parts of the file seem to be skipped. There will not be any panic or any clear indication of where the problem could come from. This is the worst kind of bug you can encounter.

It is even more problematic when you have more than one developer working on some code base. One developer might think that a future is cancellation-safe while it is not. Documentation might be obsolete. A developer might refactor some future implementation and accidentally make it cancellation-unsafe, or refactor the select! part of the code to destroy the future at a different timing than it did before. Writing unit tests to make sure that a future works properly after being destroyed and rebuilt is more than tedious.

I generally give the following guidelines: if you know for sure that your asynchronous code will be spawned as a background task, feel free to do whatever you want. If you aren’t sure, make it cancellation-safe. If it’s too hard to do so, refactor your code to spawn a background task. These guidelines have in mind the fact that the implementation of a future and its users will likely be two different developers, which is a situation often ignored in small examples.

As for improving the Rust language itself, I unfortunately don’t have any concrete solution in mind. A clippy lint that forbids local variables across yield points has been suggested, but it probably couldn’t detect when a task is simply spawned in a long-lived event loop. One could maybe imagine some InterruptibleFuture trait required by select!, but it would likely hurt the approachability of asynchronous Rust even more.

The Send trait isn’t what it means anymore

The Send trait, in Rust, means that the type it is implemented on can be moved from a thread to another. Most of the types that you manipulate in your day-to-day coding do implement this trait: String, Vec, integers, etc. It is actually easier to enumerate the types that do not implement Send, and one such example is Rc.

Types that do not implement the Send trait are generally faster alternatives. An Rc is the same as an Arc, except faster. A RefCell is the same as a Mutex, except faster. This gives the programmer the possibility to optimize when they know that what they are doing is scoped to a single thread.

Asynchronous functions, however, kind of broke this idea.

Imagine that the function below runs in a background thread, and you want to rewrite it to asynchronous Rust:

    fn background_task() {
        let rc = std::rc::Rc::new(5);
        let rc2 = rc.clone();
        bar();
    }

You might be tempted to just add async and await here and there:

    async fn background_task() {
        let rc = std::rc::Rc::new(5);
        let rc2 = rc.clone();
        bar().await;
    }

But as soon as you try to spawn background_task() in an event loop, you will hit a wall because the future returned by background_task() doesn’t implement Send. The future itself will likely jump between threads, hence the requirement. But in theory, this code is completely sound. As long as the Rc never leaves the task, we are sure that its clones can only ever be cloned or destroyed one at a time, which is where the potential unsafety lives.

Arguably the Send trait could be modified to mean “object that can be moved across a thread or task boundary”, but doing so would break code that relies on !Send for FFI-related purposes, where threads are important, such as with OpenGL or GTK.
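In practice, the two usual ways around this today are to switch to Arc, or to make sure the Rc is dropped before the await point. The sketch below checks both at compile time; `is_send`, `with_arc` and `rc_scoped` are names made up for this illustration, and `bar` is an empty placeholder.

```rust
use std::rc::Rc;
use std::sync::Arc;

/// Only compiles if `T` is `Send`, which is what a multi-threaded
/// executor typically requires of the futures it spawns.
fn is_send<T: Send>(_: &T) -> bool {
    true
}

/// Placeholder for some asynchronous work.
async fn bar() {}

/// Workaround 1: pay for atomic reference counting, even though the
/// values never leave the task.
async fn with_arc() -> i32 {
    let a = Arc::new(5);
    let a2 = a.clone();
    bar().await;
    *a + *a2
}

/// Workaround 2: keep the `Rc` in a block that ends before the await,
/// so it is not part of the state stored across the yield point.
async fn rc_scoped() -> i32 {
    let sum = {
        let rc = Rc::new(5);
        let rc2 = rc.clone();
        *rc + *rc2
    };
    bar().await;
    sum
}

// The direct translation is rejected as soon as `Send` is required,
// because the `Rc` is held across the await:
//
// async fn background_task() {
//     let rc = Rc::new(5);
//     let rc2 = rc.clone();
//     bar().await;
// }
// is_send(&background_task()); // does not compile
```

Both `is_send(&with_arc())` and `is_send(&rc_scoped())` compile, but neither workaround expresses the actual intent, which is that the Rc is confined to one task.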

Flow control is hard

Many asynchronous programs, including Substrate, are designed around what we generally call an actor model. In this design, tasks run in parallel in the background and exchange messages between each other. When there is nothing to do, these tasks go to sleep. I would argue that this is how the asynchronous Rust ecosystem encourages you to design your program.

If task A continuously sends messages to task B using an unbounded channel, and task B is slower to process these messages than task A sends them, the number of items in the channel grows forever, and you effectively have a memory leak.

To avoid this situation, it might be tempting to instead use a bounded channel. If task B is slower than task A, the buffer in the channel will fill up, and once full, task A will slow down, as it has to wait for a new slot before it can proceed. This mechanism is called back-pressure. However, if task B also sends messages towards task A, intentionally or not, using two bounded channels in opposite directions will lead to a deadlock if they are both full.

More complicated: if task A sends messages to task B, task B sends messages to task C, and task C sends messages to task A, all with bounded channels, the channels can also all fill up and deadlock. Detecting this kind of problem is almost impossible, and the only way to solve it is to have a proper code architecture that is aware of that concern.
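The two-channel case can be shown deterministically with the standard library’s bounded `sync_channel`. This is only a sketch under simplifying assumptions: `both_directions_full` is a hypothetical helper, and it uses `try_send` to observe the state in which a blocking or awaiting `send` would never return, instead of actually hanging.

```rust
use std::sync::mpsc::{sync_channel, TrySendError};

/// Fills two bounded channels that point in opposite directions and
/// reports whether each side would now be stuck when sending again.
fn both_directions_full() -> (bool, bool) {
    // Capacity of one message per direction. The receivers are kept
    // alive but never read from, as if both tasks were busy sending.
    let (a_to_b, _b_inbox) = sync_channel::<u32>(1);
    let (b_to_a, _a_inbox) = sync_channel::<u32>(1);

    // Each task sends one message; both buffers are now full.
    a_to_b.try_send(1).unwrap();
    b_to_a.try_send(2).unwrap();

    // Each task wants to send again before reading its own inbox.
    // With a waiting `send`, A would wait for B and B for A, forever.
    let a_stuck = matches!(a_to_b.try_send(3), Err(TrySendError::Full(_)));
    let b_stuck = matches!(b_to_a.try_send(4), Err(TrySendError::Full(_)));
    (a_stuck, b_stuck)
}
```

`both_directions_full()` returns `(true, true)`. Nothing in the types of either channel hints at the cycle, which is why only the overall architecture can rule it out.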

The worst part with this bounded-channels-related deadlock is that it might not happen at first, and might reveal itself only when some unrelated code change modifies the CPU profile of tasks. For example, task B is usually faster than task A, but then someone adds some feature that makes task A faster, revealing the deadlock and suddenly making their job a nightmare.

This problem isn’t specific to Rust. It’s a fundamental problem with networking and actor models in general. What I would like to highlight, however, is that Rust gives the unfortunate illusion that writing an asynchronous program is easy while it is very much not.

One of the fundamental strengths of the philosophy of Rust, to me, is that you can ask a beginner to write some code, and the worst that can happen is that it panics or doesn’t work (contrary to C/C++, where you might give control of your computer to an attacker). It is possible that a beginner accidentally introduces a mutex-based deadlock, but this kind of deadlock is generally local and can usually be avoided by removing mutexes or doing some small code reorganization. They have proven to be a non-issue in practice.

Writing proper flow control, however, is a real issue and is very much non-beginner-friendly. A lot of energy has in fact been spent over the last couple of weeks in the Polkadot code base debugging deadlocks caused by improper flow control. They were introduced either by programmers who weren’t deeply familiar with this kind of question, or, and this is maybe even more problematic, accidentally after refactoring code. The famous promise of fearless refactoring isn’t really upheld here.

As a pragmatic solution, we have introduced in Substrate a “monitored unbounded channel”. The number of items that go in and out of each channel is reported to a Prometheus client, and an alert is triggered if the difference between the two goes above a certain threshold. Bounded channels are also in use, but only in places where flow control is actually important (a.k.a. networking). This has proven to be a pragmatic approach that seems to work.
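The idea can be sketched with the standard library as follows. This is not Substrate’s implementation: the type names are hypothetical, a plain `std::sync::mpsc` channel stands in for the asynchronous one, and two atomic counters stand in for the values that would be exported to Prometheus.

```rust
use std::sync::atomic::{AtomicU64, Ordering};
use std::sync::mpsc::{channel, Receiver, SendError, Sender, TryRecvError};
use std::sync::Arc;

/// Counters shared by both halves of the channel.
#[derive(Default)]
struct Counters {
    sent: AtomicU64,
    received: AtomicU64,
}

impl Counters {
    /// Number of items sent but not yet received. An alert would fire
    /// when this stays above some threshold.
    fn backlog(&self) -> u64 {
        let sent = self.sent.load(Ordering::Relaxed);
        let received = self.received.load(Ordering::Relaxed);
        // The receiver may bump its counter before the sender does.
        sent.saturating_sub(received)
    }
}

struct MonitoredSender<T> {
    inner: Sender<T>,
    counters: Arc<Counters>,
}

struct MonitoredReceiver<T> {
    inner: Receiver<T>,
    counters: Arc<Counters>,
}

impl<T> MonitoredSender<T> {
    fn send(&self, item: T) -> Result<(), SendError<T>> {
        self.inner.send(item)?;
        self.counters.sent.fetch_add(1, Ordering::Relaxed);
        Ok(())
    }
}

impl<T> MonitoredReceiver<T> {
    fn try_recv(&self) -> Result<T, TryRecvError> {
        let item = self.inner.try_recv()?;
        self.counters.received.fetch_add(1, Ordering::Relaxed);
        Ok(item)
    }
}

fn monitored_channel<T>() -> (MonitoredSender<T>, MonitoredReceiver<T>) {
    let (tx, rx) = channel();
    let counters = Arc::new(Counters::default());
    (
        MonitoredSender { inner: tx, counters: counters.clone() },
        MonitoredReceiver { inner: rx, counters },
    )
}

/// Sends `to_send` items, receives `to_receive` of them, and returns
/// the backlog that the monitoring would report.
fn backlog_after(to_send: u32, to_receive: u32) -> u64 {
    let (tx, rx) = monitored_channel::<u32>();
    for i in 0..to_send {
        tx.send(i).unwrap();
    }
    for _ in 0..to_receive {
        rx.try_recv().unwrap();
    }
    tx.counters.backlog()
}
```

For example, `backlog_after(5, 2)` reports a backlog of 3. The channel itself never blocks the sender, so it cannot deadlock; the monitoring only makes a runaway queue visible.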

I think that the situation would be greatly improved if the ecosystem provided helpers for asynchronous code patterns that are known to be sound, such as a background task that receives messages and sends back responses, or tokio’s broadcast channel. After alternatives exist for all reasonable use cases, a warning could be added to regular bounded channels.

“Just spawn a new task”

As explained in the “Future cancelling problem” section, the most straightforward solution to cancellation problems is often to spawn an additional background task. In particular, when you have more than 2 or 3 futures to poll in parallel, spawning additional background tasks tends to solve all problems.

When you have background tasks, sharing data between these various tasks is done either through channels or with Arcs, with or without a Mutex. This tends to lead towards what can be called an Arc-ification of the code. Instead of putting objects on the stack, everything is wrapped within an Arc and passed around.

There is nothing wrong per se in putting everything in an Arc. The problem is that… it almost doesn’t feel like you’re writing Rust anymore.

The same language that encourages you to pass data by references, for instance &str instead of String, also encourages you to split up your code into small tasks that have to clone every piece of data they send to one another. The same language that provides a complex lifetimes system to avoid the need for a garbage collector now also encourages you to put everything in an Arc.
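The contrast can be made visible in a few lines. In this sketch, `std::thread::spawn` stands in for an executor’s spawn function, since both require the spawned code to be `'static`; the function names are hypothetical.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// The "CPU-only" style: borrow the input, return the result.
fn count_words_borrowed(text: &str) -> usize {
    text.split_whitespace().count()
}

/// The "spawned task" style: nothing can be borrowed from the caller,
/// so both the input and the output are wrapped in an `Arc`.
fn count_words_spawned(text: Arc<String>, total: Arc<Mutex<usize>>) -> thread::JoinHandle<()> {
    thread::spawn(move || {
        let n = text.split_whitespace().count();
        *total.lock().unwrap() += n;
    })
}

/// Runs both styles on the same text and returns
/// (borrowed result, sum accumulated by two spawned tasks).
fn both_styles() -> (usize, usize) {
    let text = Arc::new(String::from("a b c"));
    let total = Arc::new(Mutex::new(0));
    let handles: Vec<_> = (0..2)
        .map(|_| count_words_spawned(text.clone(), total.clone()))
        .collect();
    for handle in handles {
        handle.join().unwrap();
    }
    let borrowed = count_words_borrowed(&text);
    let spawned = *total.lock().unwrap();
    (borrowed, spawned)
}
```

`both_styles()` returns `(3, 6)`. Both halves are valid Rust, but only the first one looks like the language that the borrow checker was designed for.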

According to the idea of zero-cost abstractions, the programmer should split code into tasks only when they want these tasks to run in parallel, whereas the general feeling of asynchronous Rust leans towards spawning hundreds or thousands of tasks, even when it is known ahead of time that the logic of the program will not allow some of these tasks to run at the same time.

I don’t think that this is really a problem, but I do feel like there are now two languages in one: a lower-level language for CPU-only operations, that uses references and precisely tracks ownership of everything, and a higher-level language for I/O, that solves every problem by cloning data or putting it in Arcs.

Program introspection

An unfortunate consequence of switching everything from synchronous to asynchronous, one that we didn’t anticipate enough when writing Substrate, is that every single debugging tool in the Unix world assumes threads.

Do you want to find the cause of a memory leak? There are tools that will show you how much memory the code in each thread has allocated and freed. Do you want to know how busy some piece of code is? There are tools that will show you how much CPU each thread uses over time. All these tools are great, but they are useless when your code jumps from thread to thread all the time.

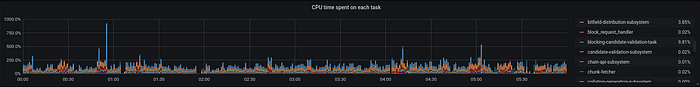

Similar to the “monitored unbounded channel” I mentioned earlier, we decided to wrap every long-running task within a wrapper that reports to a Prometheus client how many times a task has been polled, and for how long.

This turned out to be more useful than initially thought. Beyond the CPU usage, it also lets us detect for example when a long-running task hasn’t been polled for a long time (e.g. because it has deadlocked), or when a task has started being polled but never ended (e.g. it’s stuck in an infinite loop).
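Such a wrapper is small, because a future is only ever driven through its `poll` method. The following is a standard-library sketch of the idea, not Substrate’s code: `Monitored` and `PollStats` are hypothetical names, atomic counters replace the Prometheus metrics, and `NoopWaker`, `YieldOnce` and `run_monitored` exist only to drive the example by hand.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::atomic::{AtomicU64, Ordering};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::time::Instant;

/// Metrics for one task; a real setup would export these.
#[derive(Default)]
struct PollStats {
    polls: AtomicU64,
    busy_nanos: AtomicU64,
}

/// Wraps a future and records how often and for how long it is polled.
/// The inner future is boxed so that the wrapper itself is `Unpin`.
struct Monitored<F> {
    inner: Pin<Box<F>>,
    stats: Arc<PollStats>,
}

impl<F: Future> Future for Monitored<F> {
    type Output = F::Output;
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output> {
        let start = Instant::now();
        let result = self.inner.as_mut().poll(cx);
        let elapsed = start.elapsed().as_nanos() as u64;
        self.stats.polls.fetch_add(1, Ordering::Relaxed);
        self.stats.busy_nanos.fetch_add(elapsed, Ordering::Relaxed);
        result
    }
}

/// Waker that does nothing; enough to poll a future by hand.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Pending on the first poll, ready on the second.
struct YieldOnce(bool);
impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            Poll::Pending
        }
    }
}

/// Drives a task that yields twice and returns (its output, poll count).
fn run_monitored() -> (u32, u64) {
    let stats = Arc::new(PollStats::default());
    let mut task = Monitored {
        inner: Box::pin(async {
            YieldOnce(false).await;
            YieldOnce(false).await;
            7u32
        }),
        stats: stats.clone(),
    };
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    loop {
        if let Poll::Ready(value) = Pin::new(&mut task).poll(&mut cx) {
            return (value, stats.polls.load(Ordering::Relaxed));
        }
    }
}
```

`run_monitored()` returns `(7, 3)`: the task yields twice, so it is polled three times. Recording a timestamp at the start of each poll is also what makes it possible to notice a poll that never returns.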

It is important to note that this kind of measurement is realistic because the user space has control over when and how tasks are executed. While I’m lacking experience here, I imagine that it would be far from being this easy in a synchronous world. A win for async Rust here.

tokio vs async-std vs no-std

I’ve left this topic for the end.

There have been a lot of discussions about the ecosystem split between the tokio library and the async-std library. Each of these two libraries defines its own types and traits, and as an application writer or even as a library writer you have to choose between the two. Libp2p, for example, has both TcpConfig and TokioTcpConfig.

The technical difference between tokio and async-std comes from a trade-off: tokio uses the same threads to poll the kernel for events and to run the asynchronous tasks, at the price of some thread-local-based dark magic. Async-std, on the other hand, spawns separate threads to poll the kernel, thus making it slower.

While tokio has the speed advantage, its main drawback is that polling a tokio socket or timer only works within a tokio executor (otherwise, it panics). This adds some implicit relationship between the code that polls sockets or runs timers and the code that executes tasks, even though these two pieces of code are typically miles away (maybe not even in the same repository).

This turned out to be a very real issue at the time of the ecosystem transition between futures 0.1 and futures 0.3. Things were very messy, and some libraries had already transitioned while some others had not. When your code base is several hundred thousand lines long, as Substrate is, the requirement that tokio sockets and timers must be polled within an executor of the same version of tokio was almost impossible to guarantee, and we ran anywhere between one and three separate event loops during this period. We switched from tokio to async-std because it avoided the entire problem by design, but in the present day we still run a tokio 0.1 event loop in parallel because of a legacy library.

In order to avoid an ecosystem split, it has been suggested in the past that asynchronous-friendly libraries should abstract over an implementation of the AsyncRead/AsyncWrite traits, thus letting the user plug in either tokio or async-std at their convenience. The problem with that is that tokio and async-std don’t even agree on a definition of these two traits.

Even assuming that the two libraries used the same trait definitions, there is still a fundamental problem that nobody seems to actually notice: AsyncRead and AsyncWrite aren’t no-std-compatible, as they use std::io::Error in their APIs. Any library using these traits can’t compile for no_std platforms.

Using std::io::Error in one’s API almost always leads to the API being under-specified, and this is also the case for these two traits. When we implemented the AsyncRead and AsyncWrite traits on libp2p substreams, we had to solve some very pragmatic questions, for example: if poll_write returns an error, do you still have to call poll_close? If poll_read returns an error, will it panic if you try to call it again later on the same object, like futures do? In order to make sure that our implementations of AsyncRead and AsyncWrite were “compliant”, we had to read the source code of the various async-std and tokio combinators in order to get an idea of how they were calling these traits’ methods.

I do believe that abstracting over AsyncRead and AsyncWrite as they are isn’t a good idea, because of this pesky std::io::Error and its blurriness.

So how do we solve this? The smoldot library, a Parity project I’m leading, uses a more “rough” API. Rather than using traits, we directly pass buffers of data that the function can read and fill (apologies for the lack of documentation). This might look like a step back to some people, but it is in my opinion the most flexible approach. Why do I think so? Because it doesn’t use any abstraction. This API is harder to use, but hard problems come with hard solutions.
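To give an idea of the shape of such an API, here is a deliberately tiny sketch. It is not smoldot’s actual interface: `read_write`, `Progress` and `EchoError` are hypothetical, and the “protocol” merely upper-cases ASCII bytes. What matters is that the function only sees plain buffers, reports exactly how much it consumed and produced, and returns its own error type instead of std::io::Error.

```rust
/// Error type owned by this (hypothetical) protocol. Every variant has
/// a precise meaning, and nothing here depends on `std`.
#[derive(Debug, PartialEq, Eq)]
enum EchoError {
    NonAscii(u8),
}

/// Outcome of one call: how many bytes were consumed from the incoming
/// buffer and how many were written to the outgoing one.
#[derive(Debug, PartialEq, Eq)]
struct Progress {
    read: usize,
    written: usize,
}

/// Processes as many bytes of `incoming` as fit into `outgoing`.
/// The caller owns both buffers and decides how they are filled and
/// flushed, whether through tokio, async-std, or anything else.
fn read_write(incoming: &[u8], outgoing: &mut [u8]) -> Result<Progress, EchoError> {
    let n = incoming.len().min(outgoing.len());
    for (dst, &src) in outgoing[..n].iter_mut().zip(&incoming[..n]) {
        if !src.is_ascii() {
            return Err(EchoError::NonAscii(src));
        }
        *dst = src.to_ascii_uppercase();
    }
    Ok(Progress { read: n, written: n })
}
```

With a three-byte output buffer, `read_write(b"hello", &mut out)` consumes and produces three bytes and leaves `out` equal to `b"HEL"`; the caller is expected to come back with the remaining input once the output has been flushed.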

Conclusion

That’s already a lot. I didn’t go into a lot of small details, such as how much I would like to have an InfiniteStream trait, how rustfmt isn’t capable of formatting code within select!, or how there isn’t any no-std-friendly asynchronous Mutex anywhere in the ecosystem, as I imagine that this will all be covered by other people.

I preferred to focus on issues that one encounters when scaling things up, as I imagine that in the current state of things many people have tried small asynchronous projects but not many people have worked on large-scale ones.

Despite all the negative aspects, I must say that I do generally really like the poll-based approach that Rust is taking. Most of the problems encountered are encountered not because of mistakes, but because no other language really has pushed this principle this far. Programming language design is first and foremost an “artistic” activity, not a technical one, and anticipating the consequences of design choices is almost impossible.

I’m glad that Rust is actually pushing the boundaries of language design and iterating over it, rather than just watching. I’m sure that all the warts in the design do have a solution, and I do see a bright future (harr harr) for this feature.

Thanks for reading.